- Crypto security firm Ledger has announced it will enter the AI security market, with plans to launch a suite of new AI-focused technologies, including new hardware devices intended for use with AI agents, throughout 2026.

- Ledger said software-based security is insufficient to protect users as more sensitive data is shared with AI agents and the agents themselves rapidly become more powerful.

Hardware that helps protect you from rogue AI agents will soon be released by French crypto security firm Ledger, which announced it is set to enter the artificial intelligence (AI) security space by releasing a range of new technologies.

In a blog post written by the firm’s Chief Human Company Officer, Ian C. Rogers, and published April 14, Ledger outlined products designed to keep AI agents secure and their behaviour aligned with human intentions.

Rogers argued that software-based security is insufficient given AI agents’ access to sensitive information and their increasingly powerful capabilities. “Ledger is launching a complete security stack for AI Agents throughout 2026,” he said.

While AI agents need access to money, credentials, and identity to be useful, software-only security is insufficient for production-grade risk.

Ledger said it would strengthen AI agents’ trustworthiness by bringing “hardware-anchored security to the agentic economy.” The new devices would function much like Ledger’s existing crypto hardware products, storing sensitive information on the device and requiring physical button presses to execute certain tasks.
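The general pattern described here, where the key stays on the device and nothing is signed without a physical confirmation, can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Ledger's API: `SimulatedSecureDevice` and its `confirm` callback are hypothetical names, the callback stands in for the button press, and HMAC-SHA256 from the standard library stands in for the asymmetric signatures real hardware wallets produce.

```python
import hashlib
import hmac
import secrets
from typing import Callable, Optional


class SimulatedSecureDevice:
    """Hypothetical stand-in for a hardware signer; not a Ledger API.

    The key is generated inside the object and no method returns it.
    Every signature requires the `confirm` callback, which models a
    physical button press after the human has reviewed the message.
    """

    def __init__(self, confirm: Callable[[str], bool]) -> None:
        self._key = secrets.token_bytes(32)  # never handed back to the caller
        self._confirm = confirm              # models the on-device button press

    def sign(self, message: str) -> Optional[bytes]:
        # The exact message is shown for approval: no press, no signature.
        if not self._confirm(message):
            return None
        # HMAC-SHA256 stands in for the asymmetric signature a real device makes.
        return hmac.new(self._key, message.encode(), hashlib.sha256).digest()

    def verify(self, message: str, tag: bytes) -> bool:
        # Constant-time comparison against a freshly computed tag.
        expected = hmac.new(self._key, message.encode(), hashlib.sha256).digest()
        return hmac.compare_digest(expected, tag)


# Usage: the human only approves one small, specific payment.
device = SimulatedSecureDevice(confirm=lambda msg: msg == "pay 5 USDC to merchant-42")
approved = device.sign("pay 5 USDC to merchant-42")      # a 32-byte tag
blocked = device.sign("pay 5000 USDC to unknown-wallet")  # None: never confirmed
```

The point of the design is that a compromised agent can request signatures as often as it likes, but it never sees the key, and a signature only exists after the human-side check passes.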

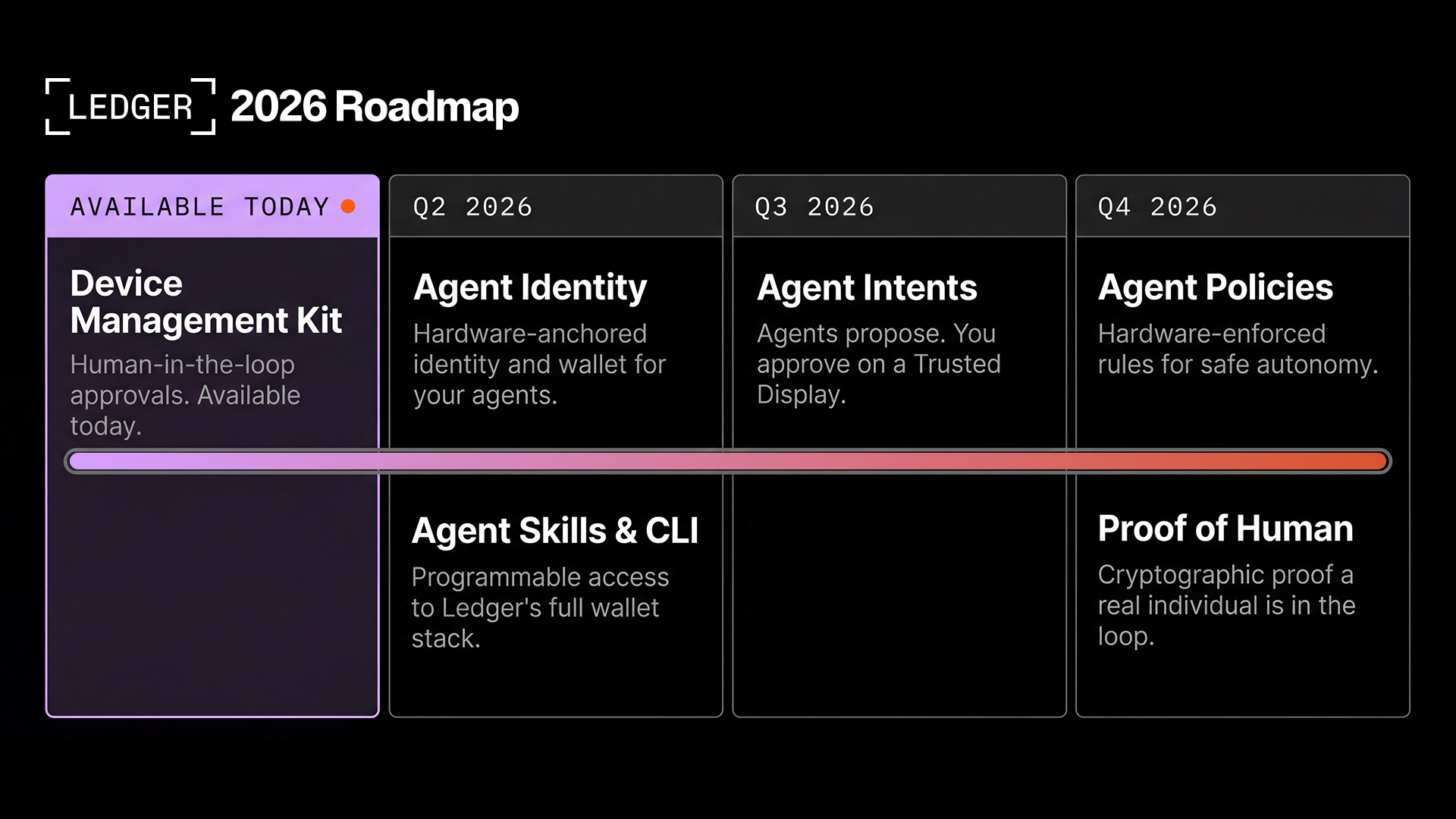

Ledger’s suite of AI agent security technologies rolling out in 2026 will include:

- Device Management Kit (DMK), which has already launched and is being used by MoonPay.

- Hardware-anchored identity and wallets for AI agents, including an agent command line interface, rolling out in Q2.

- Agent Intents, which Ledger describes as a “human-in-the-loop approval layer” for agents, rolling out in Q3.

- Agent Policies, allowing humans to use the hardware device to enforce rules on agents (such as sending limits), coming in Q4.

- Proof of Human, allowing the human behind AI agents to prove their identity, due in Q4.
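A rough sketch of how an intent-plus-policy layer of the kind listed above might behave: the agent declares an intent, human-set rules such as a per-transaction and a daily sending limit are checked first, and anything outside those limits is escalated to a person. All names here (`Intent`, `Policy`, `Verdict`, `execute`) and the three-way allow/escalate/reject outcome are hypothetical illustrations; Ledger has not published such an interface, and in Ledger's framing enforcement would sit on the hardware device rather than in agent-side Python.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Set


class Verdict(Enum):
    ALLOW = "allow"        # within policy: proceeds without a prompt
    ESCALATE = "escalate"  # outside routine limits: needs human approval
    REJECT = "reject"      # never allowed, even with approval


@dataclass(frozen=True)
class Intent:
    """An action the agent declares before anything is signed (hypothetical)."""
    recipient: str
    amount: int  # smallest currency unit, e.g. cents


@dataclass
class Policy:
    """Human-defined limits enforced outside the agent (hypothetical)."""
    allowed_recipients: Set[str]
    max_per_tx: int
    daily_limit: int
    spent_today: int = 0

    def evaluate(self, intent: Intent) -> Verdict:
        if intent.amount <= 0 or intent.recipient not in self.allowed_recipients:
            return Verdict.REJECT
        if intent.amount > self.max_per_tx:
            return Verdict.ESCALATE
        if self.spent_today + intent.amount > self.daily_limit:
            return Verdict.ESCALATE
        return Verdict.ALLOW


def execute(intent: Intent, policy: Policy, ask_human: Callable[[Intent], bool]) -> bool:
    """Return True if the intent may proceed to signing."""
    verdict = policy.evaluate(intent)
    if verdict is Verdict.REJECT:
        return False
    if verdict is Verdict.ESCALATE and not ask_human(intent):
        return False
    policy.spent_today += intent.amount  # only approved spending counts
    return True


# Usage with a human who declines every prompt.
policy = Policy(allowed_recipients={"merchant-42"}, max_per_tx=5_000, daily_limit=10_000)
decline = lambda intent: False
print(execute(Intent("merchant-42", 2_000), policy, decline))   # True: within limits
print(execute(Intent("merchant-42", 8_000), policy, decline))   # False: escalated, declined
print(execute(Intent("unknown-wallet", 100), policy, decline))  # False: rejected outright
```

Amounts are integers in the smallest currency unit so that limit checks are not affected by floating-point rounding.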


Why Is Hardware-Based Security Necessary for AI Agents?

Rogers warned that “an agentic future is coming, but an agentic future where we give agents our logins, credit cards, and identities is a security nightmare.”

As Ledger sees it, handing our most sensitive information to powerful AI agents acting on our behalf is a risky proposition, and the outcomes could be very bad for people if those agents take actions the human did not intend.


Rogers said Ledger’s fundamental purpose as a business stems from its belief that “digital private property is real, and you have the right to own and control it.” This belief has shaped the company’s approach to crypto security and now underpins its entry into AI security.

“Your agents will hold your API keys, your credentials, your identity, and your money,” he said.

We believe ownership and control must be grounded in hardware. A secure element doesn’t care if the surrounding software is compromised. The signing boundary still holds. Human approval still holds.

Ian C. Rogers, Ledger

The post Ledger Targets AI Agent Risks With Hardware-Based Security and Human Controls appeared first on Crypto News Australia.